PyTorch-based implementation of a seq2seq machine translation model. This implementation is based on the following two papers.

- Ilya Sutskever, Oriol Vinyals, Quoc V. Le (2014). Sequence to Sequence Learning with Neural Networks.

- Dzmitry Bahdanau, KyungHyun Cho, Yoshua Bengio (2016). Neural Machine Translation by Jointly Learning to Align and Translate.

The data for this project is a set of many thousands of translation pairs from one language to another. Download the data from https://www.manythings.org/anki/.
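As a rough illustration of how such a file can be turned into training pairs, here is a minimal sketch. It assumes each line is tab-separated with the English sentence in the first column and the other language in the second (any further columns are ignored). The helper names `normalize` and `read_pairs` and the sample lines are hypothetical, not taken from this repo.

```python
import re
import unicodedata


def normalize(text):
    """Lowercase, strip accents, and put a space before . ! ? (assumed preprocessing)."""
    text = unicodedata.normalize("NFD", text.lower().strip())
    # Drop combining marks so that, e.g., "é" becomes "e".
    text = "".join(ch for ch in text if unicodedata.category(ch) != "Mn")
    text = re.sub(r"([.!?])", r" \1", text)
    # Replace every run of other characters (including extra spaces) with one space.
    text = re.sub(r"[^a-z.!?]+", " ", text)
    return text.strip()


def read_pairs(lines, reverse=False):
    """Return (source, target) tuples; reverse=True swaps the two columns."""
    pairs = []
    for line in lines:
        fields = line.rstrip("\n").split("\t")
        if len(fields) < 2:
            continue  # skip blank or malformed lines
        first, second = normalize(fields[0]), normalize(fields[1])
        pairs.append((second, first) if reverse else (first, second))
    return pairs


# Illustrative lines only; a real file would be opened with encoding="utf-8".
sample_lines = ["Go.\tVa !\tattribution text\n", "I am cold.\tJ'ai froid.\n"]
pairs = read_pairs(sample_lines, reverse=True)  # French -> English
```

With `reverse=True` the pairs run from the second column to the first, which matches a French-to-English setup under the column-order assumption above.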

Training was performed on the Google Colaboratory platform, which provides free access to GPUs. GPU configuration: Tesla P100-PCIE-16GB with 3584 CUDA cores and 16 GB of HBM2 memory.

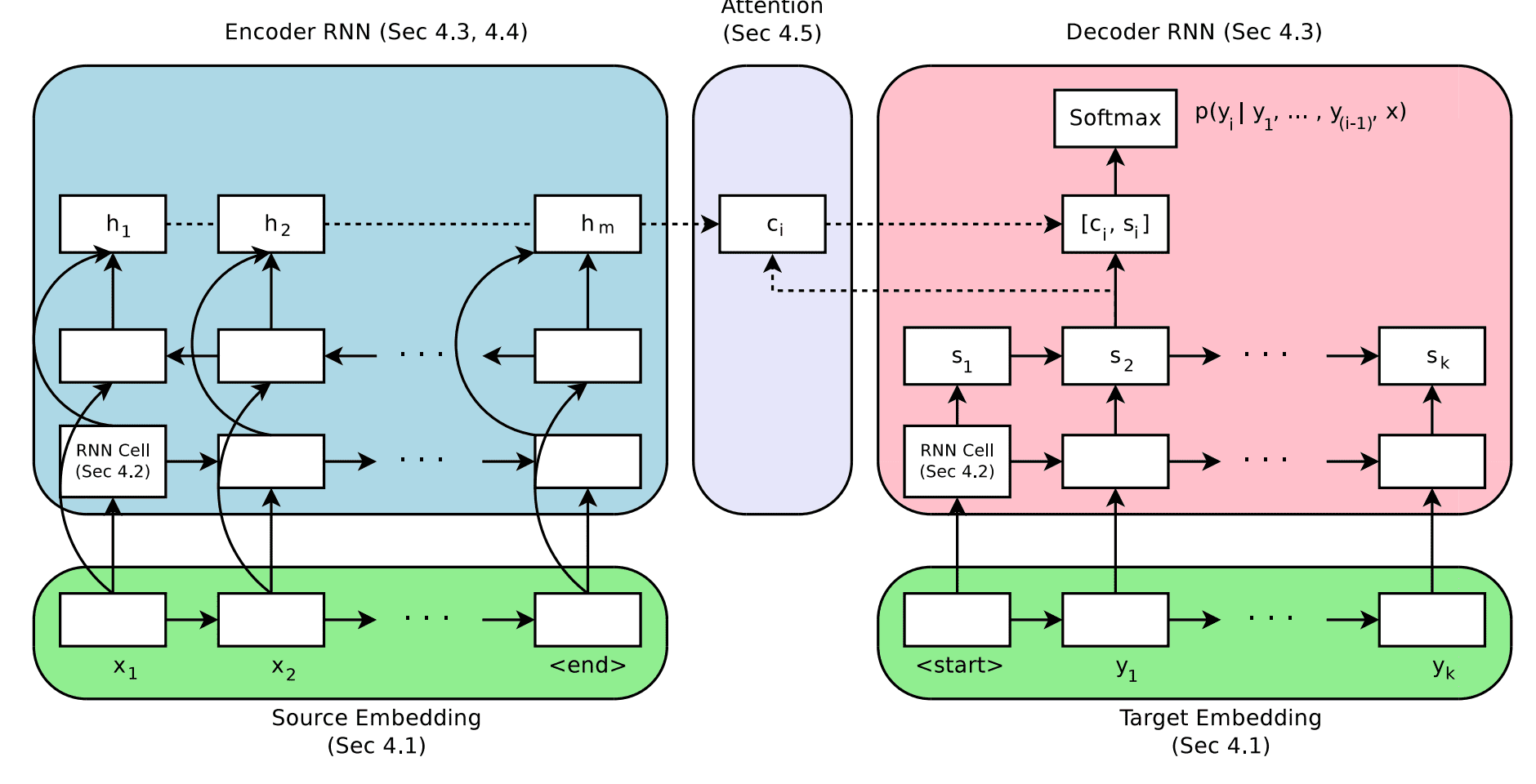

Encoder-Decoder with attention mechanism
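The attention step in such an encoder-decoder can be sketched as follows. This is a minimal version of the additive attention described by Bahdanau et al., written for illustration; the class name `AdditiveAttention`, its layer names, and the tensor sizes are assumptions, and the attention layer actually used in this repo may differ.

```python
import torch
import torch.nn as nn


class AdditiveAttention(nn.Module):
    """Additive (Bahdanau-style) attention over encoder outputs (illustrative sketch)."""

    def __init__(self, hidden_size):
        super().__init__()
        self.W_query = nn.Linear(hidden_size, hidden_size, bias=False)
        self.W_keys = nn.Linear(hidden_size, hidden_size, bias=False)
        self.v = nn.Linear(hidden_size, 1, bias=False)

    def forward(self, decoder_state, encoder_outputs):
        # decoder_state: (batch, hidden); encoder_outputs: (batch, src_len, hidden)
        query = self.W_query(decoder_state).unsqueeze(1)   # (batch, 1, hidden)
        keys = self.W_keys(encoder_outputs)                # (batch, src_len, hidden)
        scores = self.v(torch.tanh(query + keys)).squeeze(-1)  # (batch, src_len)
        weights = torch.softmax(scores, dim=-1)            # one distribution per sentence
        # Weighted sum of encoder outputs gives the context vector.
        context = torch.bmm(weights.unsqueeze(1), encoder_outputs).squeeze(1)
        return context, weights


attention = AdditiveAttention(hidden_size=8)
context, weights = attention(torch.randn(2, 8), torch.randn(2, 5, 8))
```

The `weights` tensor holds one row per sentence with one entry per source position; plotting such rows for each decoding step is what produces an attention map.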

Model variants (architecture of both encoder and decoder)

- Single layer GRU

- Single layer LSTM

- 3-layered GRU

- 3-layered LSTM
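The four variants differ only in the recurrent cell type and the number of layers, so they can be expressed as one parameterized module. The sketch below shows the encoder side only; the class name `Encoder`, the `VARIANTS` table, and the sizes are hypothetical and are not the repo's actual code or configuration.

```python
import torch
import torch.nn as nn


class Encoder(nn.Module):
    """Embedding followed by a GRU or LSTM stack (illustrative sketch)."""

    def __init__(self, vocab_size, hidden_size, cell="lstm", num_layers=1):
        super().__init__()
        rnn_cls = {"gru": nn.GRU, "lstm": nn.LSTM}[cell]
        self.embedding = nn.Embedding(vocab_size, hidden_size)
        self.rnn = rnn_cls(hidden_size, hidden_size,
                           num_layers=num_layers, batch_first=True)

    def forward(self, token_ids):
        # token_ids: (batch, src_len) integer tensor
        embedded = self.embedding(token_ids)
        # outputs: (batch, src_len, hidden)
        # state: a tensor for GRU, an (h, c) tuple for LSTM
        outputs, state = self.rnn(embedded)
        return outputs, state


# Hypothetical names for the four variants listed above.
VARIANTS = {
    "gru-1": {"cell": "gru", "num_layers": 1},
    "lstm-1": {"cell": "lstm", "num_layers": 1},
    "gru-3": {"cell": "gru", "num_layers": 3},
    "lstm-3": {"cell": "lstm", "num_layers": 3},
}

encoder = Encoder(vocab_size=20, hidden_size=16, **VARIANTS["lstm-1"])
outputs, state = encoder(torch.randint(0, 20, (2, 5)))
```

A decoder would typically be built with the same cell type and layer count so that the encoder's final state can initialize it directly.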

Clone the entire repo. (It includes the data as well.)

git clone https://github.com/pashupati98/nmt-pytorch.git cloned-repo

cd cloned-repo

ls

The default setting is French-to-English translation with a single-layer LSTM encoder-decoder architecture with attention. Run everything (training and evaluation) with a single command.

python main.py

All four models performed more or less the same.

1 : Model with single layer of GRU

Model Training process

Evaluation (Attention Map)

2 : Model with single layer of LSTM

Model Training process

Evaluation (Attention Map)

3 : Model with three layers of GRU

Model Training process

Evaluation (Attention Map)

4 : Model with three layers of LSTM

Model Training process

Evaluation (Attention Map)

Conclusion - This is a very simple architecture trained on a small dataset. Even so, the model has learned to translate short sentences reasonably well. It can be further improved by training on a larger corpus.