Shengcao Cao, Zijun Wei, Jason Kuen, Kangning Liu, Lingzhi Zhang,

Jiuxiang Gu, HyunJoon Jung, Liang-Yan Gui, Yu-Xiong Wang

ICCV 2025

[🔍Project Page] | [📄Paper] [📦Datasets and Models]

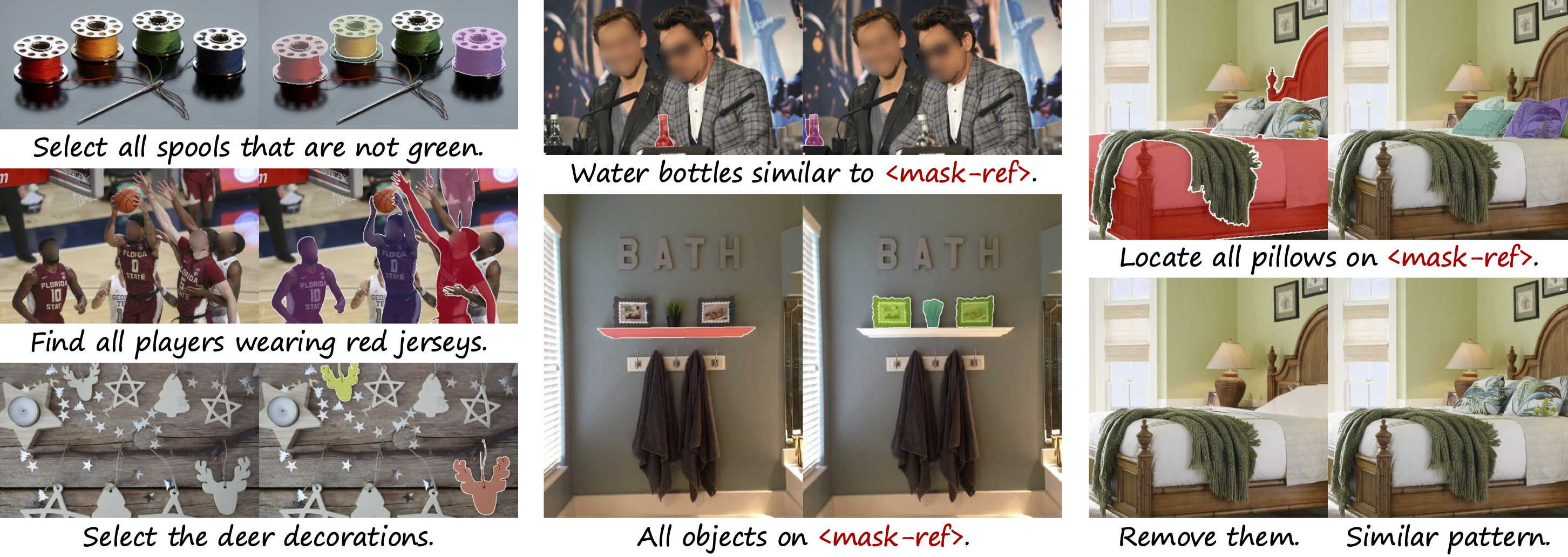

Segmentation from referring expressions & visual references enables more intuitive interactions.

To set up the environment, run the following commands:

# set root folder as current working directory (or modify as needed)

ROOT=$PWD

# create conda environment

conda create -n ras python=3.10 -y

conda activate ras

# install llava and related packages

cd $ROOT

git clone https://github.com/haotian-liu/LLaVA.git

git checkout c121f0432da27facab705978f83c4ada465e46fd

cd LLaVA

pip install -e ".[train]"

# after the following step, you may see "llava 1.2.2.post1 has requirement timm==0.6.13, but you have timm 1.0.3." but it's fine

pip install tensorboardX==2.6.2.2 pycocotools==2.0.7 open_clip_torch==2.24.0 opencv-python==4.9.0.80 numpy==1.26.4 timm==1.0.3

pip install flash-attn==2.5.8 --no-build-isolation

# install sam

cd $ROOT

git clone https://github.com/facebookresearch/segment-anything.git

git checkout dca509fe793f601edb92606367a655c15ac00fdf

cd segment-anything

pip install -e .

# clone ras repo

cd $ROOT

git clone https://github.com/shengcao-cao/RAS

cd RAS

We provide pretrained models on HuggingFace. Currently, we have released the RAS-13B-General checkpoint, which is trained on MaskGroups-2M and can be directly used for evaluation without any task-specific fine-tuning.

In order to run inference on customized samples or datasets, we also need to download segmentation model checkpoints to propose candidate masks, such as SAM.

For evaluation purposes, we first use a segmentation model (SAM or Co-DETR) to propose candidate masks, and then apply our RAS model to select from the candidates. We have preprocessed the candidate masks for evaluation datasets to facilitate quick evaluation.

To perform RES and GRES evaluation, download our processed (g)RefCOCO(+/g) datasets here. For ORES evaluation, download our processed MaskGroups-HQ dataset here.

The data folder should be set up as follows:

data/

|-- coco/

| |-- train2014/ # download from http://images.cocodataset.org/zips/train2014.zip

|

|-- refcoco/ # processed data with masks proposed by our retrained Co-DETR, download from https://huggingface.co/datasets/Shengcao1006/RAS-refcoco

| |-- grefcoco_groups_testA_cd.json

| |-- ...

| |-- refcoco_groups_val_cd.json

|

|-- MaskGroups-HQ/ # processed data with masks proposed by SAM, download from https://huggingface.co/datasets/Shengcao1006/MaskGroups-HQ

| |-- images_resized/

| |-- maskgroups_hq_val_sam.json

After setting up the data folder, use the commands specified below to run evaluation on different tasks and datasets.

- Referring Expression Segmentation (RES) on RefCOCO/RefCOCO+/RefCOCOg:

eval_res.sh. - Generalized Referring Expression Segmentation (GRES) on gRefCOCO:

eval_gres.sh. - Omnimodal Referring Expression Segmentation (ORES) on MaskGroups-HQ:

eval_ores.sh.

Using the checkpoint RAS-13B-General, we expect to reproduce the numbers of RAS without task-specific fine-tuning in our paper.

We are preparing the checkpoint, training data, and documentation. Coming soon!

We introduce several datasets for training and evaluation.

- MaskGroups-2M: A large-scale dataset with 2 million mask groups, converted from MS-COCO, LVIS, Visual Genome, and RES/GRES. It is used for training our model.

- MaskGroups-HQ: A high-quality dataset with 100K mask groups, annotated based on the EntitySeg dataset. It is used for finetuning and evaluation on our new task, omnimodal referring expression segmentation (ORES). We are only able to release the evaluation set of MaskGroups-HQ.

- LLaVA-Pretrain-Mask: A modified version of LLaVA-Pretrain, where we use SAM to propose segmentation masks and change the visual tokens from patches to masks. It is used for pretraining our mask projector.

All of our training and evaluation datasets are formulated as JSON files. Each JSON file is a list of samples, and each sample contains these keys:

id: The unique ID for the sample within the dataset.image: The corresponding image file. It needs to be joined with an image folder path.conversations: Question and answer formulated in a conversation format, following LLaVA. An example looks like this:Here,[ {'from': 'human', 'value': '<image>\n<mask_pool>\nSelect a mouse beside a laptop and a little boy sitting far from us is looking at a laptop.'}, {'from': 'gpt', 'value': '<mask_group>'} ]<mask_pool>indicates where to insert the candidate masks, and<mask_group>indicates where to insert the target mask group.mask_pool: The pool of candidate masks. It is a list of RLE-encoded masks. An example:[ {'size': [480, 640], 'counts': 'W^]35j>1N3N2N1O1O1O1N2M4N1O1O1O1O1O2N1O1O101N1O1O1O100O2N1O1iNSOZDn0c;WOZDj0d;[OWDg0h;^OSDc0k;BQD?n;FlC=R<Y100O1O100O10000O10000O100O10000000000001O0000001O1O001N2O1O1O2O1N3M6Kb0^O1O0O101N2O1N2O0O2O0O10000O2O00000O100O101N10000O1O1O1O100O2N100O1O100N3N1O2N1O2M2O2M4J_bY4'}, ... ]mask_group: The target mask group for training. It is a list of ID lists. Each ID list corresponds to one<mask_group>in the conversation. An example ofmask_group:which means the 0-th and 28-th masks should be included in the group.[[0, 28]]group_type: A string indicating the mask group type (e.g., category, attribute, position).with_ref: 0 or 1, indicating whether there is any<mask_ref>in the conversation.- (Optional, when

group['with_ref'] == 1)mask_ref: A list of mask ID lists. Each ID list corresponds to one<mask_ref>in the conversation. An example looks like this:which means the first[[0], [2]]<mask_ref>should be replaced by the 0-th mask, and the second<mask_ref>should be replaced by the 2-nd mask. - (Optional)

gt_mask_pool: The pool of "ground-truth" candidate masks. Note thatgt_mask_poolmay not be identical to ourmask_pool, because during inference, we should use a segmentation model to propose candidates (mask_pool), instead of directly using the human-created mask annotations (gt_mask_pool).gt_mask_poolfollows the same format asmask_pool. - (Optional)

gt_mask_group: The target mask group withingt_mask_pool. Same format asmask_group. - (Optional)

pred_mask_group: The model-predicted mask group. The IDs are formask_pool, notgt_mask_pool. Same format asmask_group. - (Optional)

pred_mask_scores: The model-predicted confidence scores for all candidates. This is a list of float numbers, with the same length asmask_pool.

We can use visualize_group.py to visualize mask groups in the datasets or evaluation results. See more details by running python smart_seg/annotation/helper/visualize_group.py --help.

We build our codebase upon LLaVA, SAM, and Co-DETR. We also got inspirations from LISA, GLaMM, and Cambrian-1. Many thanks to the authors for open-sourcing their code and models!

If you find our work useful in your research, please consider citing:

@inproceedings{cao2025refer,

title={Refer to Any Segmentation Mask Group With Vision-Language Prompts},

author={Cao, Shengcao and Wei, Zijun and Kuen, Jason and Liu, Kangning and Zhang, Lingzhi and Gu, Jiuxiang and Jung, HyunJoon and Gui, Liang-Yan and Wang, Yu-Xiong},

booktitle={ICCV},

year={2025}

}